Open Data

Introduction

In 2013, IBM estimated that 90% of the world’s computerised data had been collected in the previous two years. As the amount of data available expands dramatically, handling that data becomes a complex issue: how can we use it to its full potential, when the sheer volume makes it so hard to process using traditional means?
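A back-of-the-envelope reading of the IBM figure shows what "expands dramatically" means. Assume, as our own simplification rather than anything IBM stated, that no older data was deleted and that growth was steadily exponential with an annual factor $g$. If $V$ is today's total, the total two years earlier was $0.1\,V$:

```latex
$$
g^{2} = \frac{V}{0.1\,V} = 10
\quad\Rightarrow\quad
g = \sqrt{10} \approx 3.16,
\qquad
t_{\text{double}} = \frac{\ln 2}{\ln g} = \frac{2\ln 2}{\ln 10} \approx 0.60\ \text{years}
$$
```

On those assumptions, the world's stock of data was more than tripling every year and doubling roughly every seven months.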

The answer might come from crowd-sourced projects. The Wikimedia community has years of experience organising autonomous projects on a grand scale to process enormous amounts of information for the public good. The result has been an information resource of unprecedented scale and accuracy.

Opening big data to the public could bring the power of crowdsourcing to any number of projects, allowing information to be processed effectively and for common benefit. In an age of mass surveillance, the democratisation of big data has important implications for technological ethics.

Featured Speakers

Lydia Pintscher

Barbican Hall: Friday 10:30 – 11:00

"Mainstream and open. We have achieved this for Free Software. Now it is time to do the same for Open Data."

Lydia Pintscher is the product manager for Wikidata, working for Wikimedia Germany. She has been with the project since its beginning in 2012, starting as the community liaison for Wikidata and now in charge of product direction. Lydia is a member of the board of directors of KDE, the open-source software and technology community, which has delivered more than 200 individual projects and has more than 5,000 contributors.

Follow Lydia Pintscher on Twitter

Markus Krötzsch

Barbican Hall: Friday 11:30 – 12:00

"Wikidata is in its infancy: it just got its first full set of datatypes and is only about to learn to answer to simple queries. Time to do your research if you want to keep up once it starts to run."

Markus Krötzsch is a research group leader at TU Dresden and an architectural advisor to the Wikidata project, which was strongly influenced by his project Semantic MediaWiki. His research in information management and data access is closely related to his open source activities. He is an editor of the W3C Web Ontology Language (OWL) specification, an author of several textbooks on semantic technologies, and co-creator of various open source projects, the most recent being the WMF-funded Wikidata Toolkit.

You could follow Markus Krötzsch on Twitter, but maybe you'd rather follow his publications.

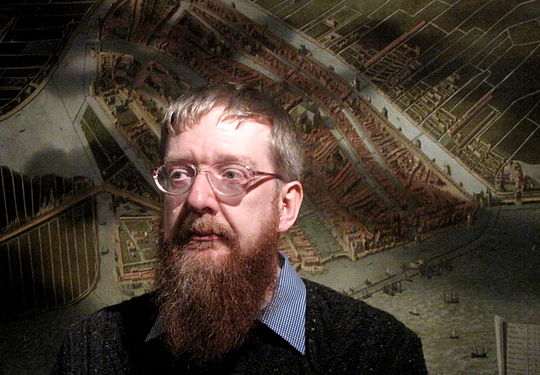

Viktor Mayer-Schönberger

Barbican Hall: Friday 12:00 - 12:30

"Sometimes the constraints that we live with, and presume are the same for everything, are really only functions of the scale in which we operate."

Viktor Mayer-Schönberger is Professor of Internet Governance and Regulation at the Oxford Internet Institute, University of Oxford, and the co-author of Big Data: A Revolution That Will Transform How We Live, Work, and Think (HMH, 2013). He spent ten years on the faculty of Harvard's John F. Kennedy School of Government.

Follow Viktor Mayer-Schönberger on Twitter

Richard Stirling

Barbican Hall: Friday 14:30 - 15:00

"Open data is a tool which can create economic, environmental and societal value around the world."

Since 2008, Richard Stirling has been at the heart of transparency and open data initiatives in the UK. Within government, he helped shape the current transparency agenda and created data.gov.uk and the world’s first online competition for government data, www.showusabetterway.com. He is now International Director at the Open Data Institute, where he is committed to catalysing the evolution of open data culture to create economic, environmental and social value.

Rufus Pollock

Barbican Hall: Friday 17:00 - 17:30

"Every day we face both personal challenges such as the quickest way to get to the work and global ones such as climate change and how to sustainably feed and educate seven billion people."

Rufus Pollock co-founded the Open Knowledge Foundation in 2004 and is one of its Directors. He is also a Shuttleworth Foundation Fellow and an Associate of the Centre for Intellectual Property and Information Law at the University of Cambridge.

Follow Rufus Pollock on Twitter

Chris Taggart

Barbican Hall: Sunday 15:30 – 16:00

"Our lives are determined by data and open data, particularly open data about companies is about having the power to understand, influence and determine our futures."

Chris Taggart is the co-founder and CEO of OpenCorporates. Originally a magazine journalist and publisher, he is an experienced entrepreneur who has started and grown several successful companies. He has worked exclusively in the field of open government and public sector information since 2009, and The Guardian named him one of its Top 10 Digital Social Innovators to watch.

Follow Chris Taggart on Twitter

Presentations

OpenStreetMap

Wikidata

Just as Wikipedia collects textual information from many kinds of sources, Wikidata does something similar for data. Structured data is entered and maintained collaboratively and autonomously by the community, and published under a free license for anyone to use, whether within Wikimedia projects or beyond.
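To make "structured data" concrete: each Wikidata item is a set of labels plus statements (property/value pairs). The sketch below parses an abridged copy of the JSON layout that Wikidata serves for an item (for example at https://www.wikidata.org/wiki/Special:EntityData/Q42.json) and reads the "instance of" (P31) statement of Q42, Douglas Adams, whose value is Q5 ("human"). The sample is hard-coded and trimmed so the example runs offline, and the helper name item_values is our own, not part of any Wikidata library.

```python
import json

# Abridged entity in the JSON layout of Wikidata's Special:EntityData output
# (most labels, statements and metadata removed for brevity).
ENTITY_JSON = """
{
  "entities": {
    "Q42": {
      "id": "Q42",
      "labels": {"en": {"language": "en", "value": "Douglas Adams"}},
      "claims": {
        "P31": [
          {
            "mainsnak": {
              "snaktype": "value",
              "property": "P31",
              "datatype": "wikibase-item",
              "datavalue": {
                "type": "wikibase-entityid",
                "value": {"entity-type": "item", "numeric-id": 5, "id": "Q5"}
              }
            },
            "type": "statement",
            "rank": "normal"
          }
        ]
      }
    }
  }
}
"""


def item_values(entity: dict, prop: str) -> list:
    """Return the item IDs given as values of `prop` in an entity's statements."""
    ids = []
    for statement in entity.get("claims", {}).get(prop, []):
        snak = statement["mainsnak"]
        # "novalue" and "somevalue" snaks carry no datavalue, so skip them.
        if snak.get("snaktype") != "value":
            continue
        ids.append(snak["datavalue"]["value"]["id"])
    return ids


entity = json.loads(ENTITY_JSON)["entities"]["Q42"]
print(entity["labels"]["en"]["value"], item_values(entity, "P31"))
# prints: Douglas Adams ['Q5']
```

Because every value is typed and keyed by property ID rather than written as prose, any program can read it without parsing article text.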

This is probably the most significant big data project under way in the Wikimedia community. It is completely open: you can get involved today and use Wikidata in whatever way interests you.

Wikidata has resulted in machine-readable information that enables users to quickly make sense of the vast amount of data available in Wikimedia projects. It can be hard to imagine what this means until one has seen the effects in action: kinds of knowledge that used to be taken for granted as the preserve of human learning are now being created automatically, using the power of open data. See below for some examples of what Wikidata's data has already been used to build. Many more tools will follow in the next weeks and months.

Creating machine-readable information, organised by location and date, on all major world events in history. Users can query a search engine with terms corresponding to the Wikidata ID assigned to a particular place and/or event, and the tool displays a map and timeline of the key information.

Using contextual data to translate 2D images into 3D models. This astonishing tool takes 2D images and, using contextual data from the Wikipedia page, transforms them into 3D models tagged with the objects in the scene that are linked in the article text. Data sources include not just Wikipedia itself but also third parties such as Google Image Search.

GeneaWiki displays a family tree for famous people like Johann Sebastian Bach based on relations in Wikidata.

The Wikidata Map Interface visualizes information associated with a geocoordinate. It can, for example, show the metro networks of major cities.

An open source JavaScript library that uses Wikidata to look up the labels of entities marked up on a website and display them in the user's language.

Wiri uses data from Wikidata to answer your questions in natural language.

Centralising multi-language information on the same topic. Wikipedia editors can use Wikidata to automatically access pages on the same topic in different languages, which helps with research and keeps interlanguage links up to date.

Wikidata is still a young project, and many questions remain about how it will be expanded and used. Anybody can independently create applications that enhance or build on Wikidata information.
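Both the interlanguage links in the last example and the label-lookup JavaScript library mentioned above draw on two sections of an entity's JSON: labels, keyed by language code, and sitelinks, keyed by site ID such as enwiki. Below is a minimal Python sketch over an abridged, hard-coded copy of those sections for Q42 (Douglas Adams). The helper names label_for and interlanguage_links are hypothetical, not the interface of that library, and the site-key handling is simplified: it assumes Wikipedia keys have the form "<language>wiki" and skips only a short, non-exhaustive list of other projects.

```python
from __future__ import annotations

from typing import Optional
from urllib.parse import quote

# Abridged "labels" and "sitelinks" sections of a Wikidata entity (Q42).
ENTITY = {
    "labels": {
        "en": {"language": "en", "value": "Douglas Adams"},
        "de": {"language": "de", "value": "Douglas Adams"},
    },
    "sitelinks": {
        "enwiki": {"site": "enwiki", "title": "Douglas Adams"},
        "dewiki": {"site": "dewiki", "title": "Douglas Adams"},
        "enwikiquote": {"site": "enwikiquote", "title": "Douglas Adams"},
    },
}

# Site keys that end in "wiki" but are not language Wikipedias (non-exhaustive).
NON_WIKIPEDIA_SITES = {"commonswiki", "metawiki", "specieswiki",
                       "wikidatawiki", "mediawikiwiki"}


def label_for(entity: dict, languages: list[str]) -> Optional[str]:
    """Return the label in the first language the entity has, in order of preference."""
    labels = entity.get("labels", {})
    for lang in languages:
        if lang in labels:
            return labels[lang]["value"]
    return None


def interlanguage_links(entity: dict) -> dict[str, str]:
    """Map each language code to the URL of that language's Wikipedia article."""
    links = {}
    for site, link in entity.get("sitelinks", {}).items():
        # Keys such as "enwikiquote" belong to sister projects, not Wikipedia.
        if not site.endswith("wiki") or site in NON_WIKIPEDIA_SITES:
            continue
        # Simplification: treat the key prefix as the subdomain, "_" becoming "-".
        lang = site[: -len("wiki")].replace("_", "-")
        title = quote(link["title"].replace(" ", "_"))
        links[lang] = f"https://{lang}.wikipedia.org/wiki/{title}"
    return links


print(label_for(ENTITY, ["fr", "de", "en"]))  # no French label, so German is used
print(interlanguage_links(ENTITY))
```

A page would call label_for with the reader's preferred languages, while an editing tool would use interlanguage_links to list every Wikipedia article about the same item from a single record.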

Open datasets and Wikimedia projects

Besides structured data, there is another kind of data that Wikidata does not handle: open datasets. In contrast with the structured data hosted in Wikidata, static dataset tables do not need to be editable, just versioned as a complete unit. The relationship between open datasets and Wikidata items is akin to that between source material and Wikipedia articles. Sometimes you can extract information from a single source to add to several different articles, but you wouldn't copy the whole text verbatim, since the two represent different levels of information granularity.
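The contrast above can be sketched in Python: the dataset is stored and versioned only as a whole file (identified here by a SHA-256 hash of its bytes, which is our own choice), while individual facts are lifted out of it as Wikidata-style statements for separate items. The rows, the DatasetStore class, the to_statements function, and the use of population (P1082) with a point-in-time (P585) qualifier are illustrative assumptions, not a description of any existing Wikimedia tool.

```python
import csv
import hashlib
import io

# Made-up rows standing in for a dataset published on an open data portal.
DATASET = b"place,population,year\nTown A,12000,2013\nTown B,8500,2013\n"

POPULATION = "P1082"    # Wikidata property "population"
POINT_IN_TIME = "P585"  # Wikidata property "point in time", used as a qualifier


class DatasetStore:
    """Hypothetical store that keeps each dataset version as one immutable unit."""

    def __init__(self) -> None:
        self.blobs = {}    # version ID -> complete file contents
        self.history = []  # version IDs in upload order

    def upload(self, content: bytes) -> str:
        # The ID hashes the whole file, so changing any cell creates a new version.
        version_id = hashlib.sha256(content).hexdigest()
        self.blobs.setdefault(version_id, content)
        if not self.history or self.history[-1] != version_id:
            self.history.append(version_id)
        return version_id

    def latest(self) -> bytes:
        return self.blobs[self.history[-1]]


def to_statements(content: bytes) -> list:
    """Lift one population statement per row, each destined for a different item."""
    statements = []
    for row in csv.DictReader(io.StringIO(content.decode("utf-8"))):
        statements.append({
            "item": row["place"],  # in practice, the place's Wikidata item ID
            "property": POPULATION,
            "value": int(row["population"]),
            # Simplified: Wikidata stores time values in a richer format.
            "qualifiers": {POINT_IN_TIME: row["year"]},
        })
    return statements


store = DatasetStore()
version = store.upload(DATASET)
for statement in to_statements(store.latest()):
    print(statement["item"], statement["property"], statement["value"])
```

The table itself is never edited cell by cell; a correction is a new upload, while each extracted statement lives on its own item at a finer granularity.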

Open datasets, which are what governments and other open data organizations release through portals like http://datahub.io/, are still not properly handled by the software behind Wikimedia projects. One idea under discussion is whether a “Data-Commons project”, or Commons itself, could store selected datasets as an intermediate step, either to integrate them into Wikidata or to use them directly to create visualizations in Wikipedia. The following tool is being developed with that goal in mind:

Limn is a MediaWiki extension that uses Vega to visualize datasets as maps or charts. In Vega, a visualization has three parts:

- Data (one or more datasets, in formats such as GeoJSON, TopoJSON, TSV or CSV, or layers from a map)

- Transformations on that data (scales, normalization, etc.)

- Marks (visual representations)
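As a minimal sketch of those three parts, here is a small bar-chart specification built as a Python dict and serialised to JSON. It follows the current Vega 5 schema; the Vega version Limn used may name some properties differently, so treat it as an illustration of the structure rather than a spec Limn would accept as-is. The category/amount values are made up.

```python
import json

spec = {
    "$schema": "https://vega.github.io/schema/vega/v5.json",
    "width": 400,
    "height": 200,
    # 1. Data: an inline table. Vega can also load CSV, TSV, JSON (including
    #    GeoJSON) or TopoJSON from a URL.
    "data": [
        {
            "name": "table",
            "values": [
                {"category": "A", "amount": 28},
                {"category": "B", "amount": 55},
                {"category": "C", "amount": 43},
            ],
            # 2a. A transformation applied to the rows before drawing.
            "transform": [{"type": "filter", "expr": "datum.amount > 0"}],
        }
    ],
    # 2b. Scales map data values onto pixel positions.
    "scales": [
        {"name": "xscale", "type": "band", "range": "width", "padding": 0.1,
         "domain": {"data": "table", "field": "category"}},
        {"name": "yscale", "range": "height", "nice": True,
         "domain": {"data": "table", "field": "amount"}},
    ],
    # 3. Marks: one rectangle (bar) per remaining row.
    "marks": [
        {
            "type": "rect",
            "from": {"data": "table"},
            "encode": {
                "enter": {
                    "x": {"scale": "xscale", "field": "category"},
                    "width": {"scale": "xscale", "band": 1},
                    "y": {"scale": "yscale", "field": "amount"},
                    "y2": {"scale": "yscale", "value": 0},
                }
            },
        }
    ],
}

vega_json = json.dumps(spec, indent=2)
print(vega_json)
```

Keeping data, transformations and marks as separate sections is what lets the same dataset be redrawn as a different chart, or a map, by swapping only the scales and marks.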

Centralised discussion on open datasets.

Getting involved

Attend Wikimania, or consider volunteering!